Tidy Text, Tokenization & Term Frequency

Text Mining Module 1: A Code Along

Welcome to the Text Mining Code Along for Module 1

The Text Mining course is designed for those seeking an introductory understanding of how to quantify the text in documents in order to better understand their properties.

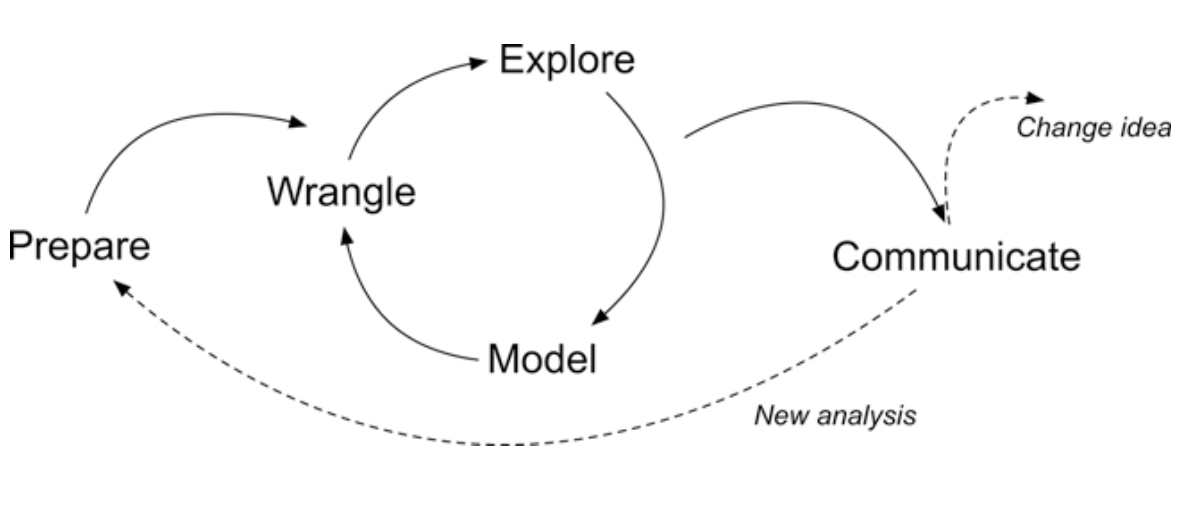

The following Code Along is a companion to the Module 1 case study’s Prepare and Wrangle stages.

Figure 2.2 Steps of Data-Intensive Research Workflow

[@krumm2018]

Module Objectives

This code along is about getting our text “tidy.” By the end of this module we will:

- Understand the context and data sources we’re working with so we can formulate useful and answerable questions.

- Practice reading, manipulating, cleaning, transforming, and merging text data.

Context of the Problem

We are working with open-ended survey data from an evaluation of online professional development offered by the North Carolina Department of Public Instruction.

This PD is part of the state’s Race to the Top (RttT) efforts.

Research Questions:

What aspects of online professional development offerings do teachers find most valuable?

How might resources differ in the value they afford teachers?

Load Libraries

- Load the tidyverse and tidytext packages using library()
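A minimal version of that chunk, assuming both packages are already installed (for example with install.packages(c("tidyverse", "tidytext"))):

```r
# Attach the core tidyverse packages (readr, dplyr, tidyr, tibble, and others)
library(tidyverse)

# Attach tidytext for tokenizing text and for the stop_words data
library(tidytext)
```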

Wrangling Text Data

- Data wrangling is essential because it involves the initial steps of going from raw data to a dataset that can be explored and modeled [@krumm2018]. Text mining is no exception. Today we will:

  - Read raw data. Before working with data, we need to “read” it into R. It also helps to inspect your data, and we’ll review several functions for doing so.

  - Reduce the data. After viewing our data, we use tools from the dplyr package to rename, select, slice, and filter it in preparation for analysis.

  - Tidy text. We’ll learn how to use the tidytext package to both “tidy” and tokenize our text in order to create a data frame to use for analysis.

Reading Data

This section introduces the following functions for reading data into R and inspecting the contents of a data frame:

- readr::read_csv(): read .csv files into R.

- base::print(): view your data frame in the Console pane.

- tibble::view(): view your data frame in the Source pane.

- tibble::glimpse(): like print(), but transposed so you can see all columns.

- utils::head(): view the first 6 rows of your data.

- utils::tail(): view the last 6 rows of your data.

- readr::write_csv(): write .csv files to a directory.

Reading Data, Cont.

To get started, we need to import, or “read”, our data into R.

The RttT Online PD survey data is stored in a CSV file named opd_survey.csv, located in the data folder of this project.

Use read_csv() to read opd_survey.csv into a new object named opd_survey.
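In the project itself, the call is opd_survey <- read_csv("data/opd_survey.csv"), assuming the working directory is the project root. Because that file only exists inside the project, the sketch below instead round-trips a small, made-up table (example_survey, a hypothetical name) through a temporary file, which also shows write_csv() from the list above.

```r
library(readr)
library(tibble)

# Hypothetical two-row extract; these are NOT rows from opd_survey.csv
example_survey <- tibble(
  Role = c("Teacher", "School Support Staff"),
  Q21  = c("Understanding the change", NA)
)

# write_csv() saves a data frame as a .csv file (a temporary file here)
path <- tempfile(fileext = ".csv")
write_csv(example_survey, path)

# read_csv() reads the file back as a tibble; "NA" and empty cells become missing values
example_read <- read_csv(path, show_col_types = FALSE)
example_read
```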

If you have questions about this function, don’t be afraid to look it up in the Console using

?read_csv

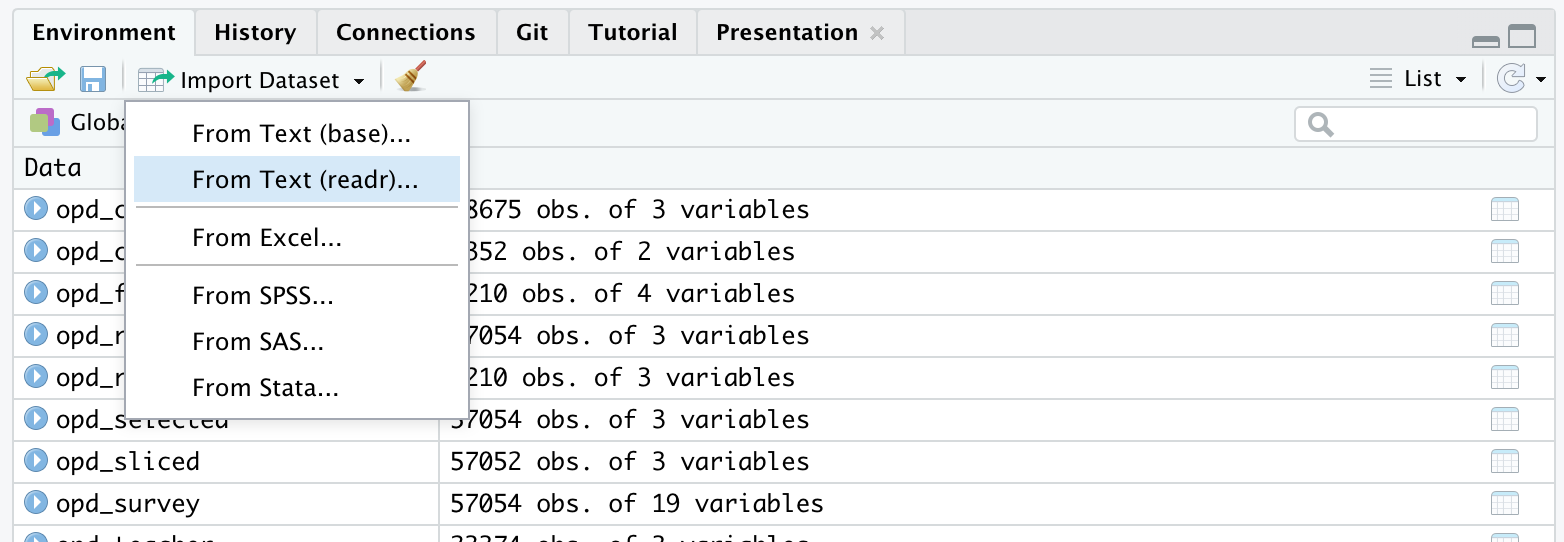

- There is also an Import Dataset feature under the Environment tab in the upper-right pane of RStudio.

Viewing Data

- Once your data is in R, there are many ways to view it. Try each of the viewing functions introduced above on opd_survey:
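A sketch of the calls behind the output that follows. On the real data, pass opd_survey to each function; here a tiny hypothetical stand-in (toy_view) keeps the example runnable on its own, and view() is wrapped in interactive() because it opens RStudio's data viewer.

```r
library(tibble)

# Hypothetical stand-in for opd_survey; values loosely mimic the survey
toy_view <- tibble(
  RecordedDate = c("3/14/12 12:41", "3/14/12 13:31", "7/2/13 10:20"),
  Role         = c("Central Office Staff", "School Support Staff", "Teacher"),
  Q21          = c(NA, "Understanding the change", "None, really.")
)

print(toy_view)    # Console pane; tibbles with more than 20 rows show only the first 10
glimpse(toy_view)  # transposed: one line per column
head(toy_view)     # first 6 rows (all 3 here)
tail(toy_view, 2)  # last 2 rows; tail() defaults to 6
names(toy_view)    # column names

# view() opens a spreadsheet-style tab in the Source pane, so only call it interactively
if (interactive()) view(toy_view)
```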

# A tibble: 57,054 × 19

RecordedDate ResponseId Role Q14 Q16...5 Resource Resource_8_TEXT

<chr> <chr> <chr> <chr> <chr> <chr> <chr>

1 "Recorded Date" "Response… "Wha… "Ple… "Which… "Please… "Please indica…

2 "{\"ImportId\":\"rec… "{\"Impor… "{\"… "{\"… "{\"Im… "{\"Imp… "{\"ImportId\"…

3 "3/14/12 12:41" "R_6fKCyE… "Cen… "K-1… "Eleme… "Summer… <NA>

4 "3/14/12 13:31" "R_09rHle… "Cen… "Ele… "Eleme… "Online… <NA>

5 "3/14/12 14:51" "R_1BlKt2… "Sch… "Hig… "Not A… "Online… <NA>

6 "3/14/12 15:02" "R_bPGUVT… "Sch… "Ele… "Guida… "Calend… <NA>

7 "3/14/12 17:24" "R_egJEHM… "Tea… "Ele… "Engli… "Live W… <NA>

8 "3/15/12 9:18" "R_4PEbT4… "Tea… "Ele… "Infor… "Online… <NA>

9 "3/15/12 10:54" "R_eqB1bM… "Sch… "Mid… "Guida… "Live W… <NA>

10 "3/15/12 14:20" "R_ewwUwi… "Cen… "Pre… "Other… "Wiki" <NA>

# ℹ 57,044 more rows

# ℹ 12 more variables: Resource_9_TEXT <chr>, Resource_10_TEXT...9 <chr>,

# Topic <chr>, Resource_10_TEXT...11 <chr>, Q16...12 <chr>, Q16_9_TEXT <chr>,

# Q19 <chr>, Q20 <chr>, Q21 <chr>, Q26 <chr>, Q37 <chr>, Q8 <chr>

# view your data frame transposed so you can see every column and the first few entries

glimpse(opd_survey)

Rows: 57,054

Columns: 19

$ RecordedDate <chr> "Recorded Date", "{\"ImportId\":\"recordedDate\"…

$ ResponseId <chr> "Response ID", "{\"ImportId\":\"_recordId\"}", "…

$ Role <chr> "What is your role within your school district o…

$ Q14 <chr> "Please select the school level(s) you work with…

$ Q16...5 <chr> "Which content area(s) do you specialize in? (S…

$ Resource <chr> "Please indicate the online professional develop…

$ Resource_8_TEXT <chr> "Please indicate the online professional develop…

$ Resource_9_TEXT <chr> "Please indicate the online professional develop…

$ Resource_10_TEXT...9 <chr> "Please indicate the online professional develop…

$ Topic <chr> "What was the primary focus of the webinar you a…

$ Resource_10_TEXT...11 <chr> "What was the primary focus of the webinar you a…

$ Q16...12 <chr> "Which primary content area(s) did the webinar a…

$ Q16_9_TEXT <chr> "Which primary content area(s) did the webinar a…

$ Q19 <chr> "Please specify the online learning module you a…

$ Q20 <chr> "How are you using this resource?", "{\"ImportId…

$ Q21 <chr> "What was the most beneficial/valuable aspect of…

$ Q26 <chr> "What recommendations do you have for improving …

$ Q37 <chr> "What recommendations do you have for making thi…

$ Q8 <chr> "Which of the following best describe(s) how you…

# A tibble: 6 × 19

RecordedDate ResponseId Role Q14 Q16...5 Resource Resource_8_TEXT

<chr> <chr> <chr> <chr> <chr> <chr> <chr>

1 "Recorded Date" "Response… "Wha… "Ple… "Which… "Please… "Please indica…

2 "{\"ImportId\":\"reco… "{\"Impor… "{\"… "{\"… "{\"Im… "{\"Imp… "{\"ImportId\"…

3 "3/14/12 12:41" "R_6fKCyE… "Cen… "K-1… "Eleme… "Summer… <NA>

4 "3/14/12 13:31" "R_09rHle… "Cen… "Ele… "Eleme… "Online… <NA>

5 "3/14/12 14:51" "R_1BlKt2… "Sch… "Hig… "Not A… "Online… <NA>

6 "3/14/12 15:02" "R_bPGUVT… "Sch… "Ele… "Guida… "Calend… <NA>

# ℹ 12 more variables: Resource_9_TEXT <chr>, Resource_10_TEXT...9 <chr>,

# Topic <chr>, Resource_10_TEXT...11 <chr>, Q16...12 <chr>, Q16_9_TEXT <chr>,

# Q19 <chr>, Q20 <chr>, Q21 <chr>, Q26 <chr>, Q37 <chr>, Q8 <chr>

# A tibble: 6 × 19

RecordedDate ResponseId Role Q14 Q16...5 Resource Resource_8_TEXT

<chr> <chr> <chr> <chr> <chr> <chr> <chr>

1 7/2/13 10:20 R_0cggNPIobej2kHX Teacher Middl… World … Online … <NA>

2 7/2/13 12:32 R_bpZ2jPQV1BOta2p <NA> <NA> <NA> <NA> <NA>

3 7/2/13 12:32 R_4SbNuxFI6qv8pQF Teacher Eleme… Mathem… Online … <NA>

4 7/2/13 12:32 R_1TT9rRNolK2xSDP <NA> <NA> <NA> <NA> <NA>

5 7/2/13 12:32 R_8raUHcIydALnRhH <NA> <NA> <NA> <NA> <NA>

6 7/2/13 12:32 R_2bs3lzLBdGWjkOx <NA> <NA> <NA> <NA> <NA>

# ℹ 12 more variables: Resource_9_TEXT <chr>, Resource_10_TEXT...9 <chr>,

# Topic <chr>, Resource_10_TEXT...11 <chr>, Q16...12 <chr>, Q16_9_TEXT <chr>,

# Q19 <chr>, Q20 <chr>, Q21 <chr>, Q26 <chr>, Q37 <chr>, Q8 <chr>

[1] "RecordedDate" "ResponseId" "Role"

[4] "Q14" "Q16...5" "Resource"

[7] "Resource_8_TEXT" "Resource_9_TEXT" "Resource_10_TEXT...9"

[10] "Topic" "Resource_10_TEXT...11" "Q16...12"

[13] "Q16_9_TEXT" "Q19" "Q20"

[16] "Q21" "Q26" "Q37"

[19] "Q8"

Reducing Data

dplyr functions

- select(): picks variables based on their names.

- slice(): lets you select, remove, and duplicate rows.

- rename(): changes the names of individual variables using new_name = old_name syntax.

- filter(): picks cases, or rows, based on their values in a specified column.

tidyr functions

- drop_na(): removes rows that contain missing values (NA).
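To see what drop_na() does and does not treat as missing, here is a small sketch on hypothetical data (toy_na is an illustrative name): only true NA values are dropped, while a string such as "N/A" survives unless it was converted to NA when the file was read (for example, read_csv(..., na = c("", "NA", "N/A"))).

```r
library(tibble)
library(tidyr)

# Hypothetical responses: one real NA and one literal "N/A" string
toy_na <- tibble(
  Role = c("Teacher", "Teacher", "Teacher"),
  text = c("Modeling", NA, "N/A")
)

# With no columns named, drop_na() drops rows with an NA in any column
toy_complete <- drop_na(toy_na)
toy_complete  # 2 rows: "Modeling" and the string "N/A"

# To check only specific columns, name them, e.g. drop_na(toy_na, text)
```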

Subset Columns

- To help address our research questions, let’s first reduce our dataset to only the columns we need.

select() is a dplyr function that chooses specific variables, or columns, of a data frame.

select() the columns Role, Resource, and Q21, and assign them to a new data frame named opd_selected using the <- assignment operator.

#select relevant columns

opd_selected <- select(opd_survey, Role, Resource, Q21)

#check data

head(opd_selected)

# A tibble: 6 × 3

Role Resource Q21

<chr> <chr> <chr>

1 "What is your role within your school district or organization… "Please… "Wha…

2 "{\"ImportId\":\"QID2\"}" "{\"Imp… "{\"…

3 "Central Office Staff (e.g. Superintendents, Tech Director, Cu… "Summer… <NA>

4 "Central Office Staff (e.g. Superintendents, Tech Director, Cu… "Online… "Glo…

5 "School Support Staff (e.g. Counselors, Technology Facilitator… "Online… <NA>

6 "School Support Staff (e.g. Counselors, Technology Facilitator… "Calend… "com…

Rename Columns

rename() allows us to change the names of existing columns, which can make them more informative in our data workflow.

- Take our opd_selected data frame and use rename(), with new_name = old_name syntax, to change the column name from Q21 to text, and save the result as opd_renamed.
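On the real data, this step is opd_renamed <- rename(opd_selected, text = Q21). The sketch below runs the same call on a one-row hypothetical stand-in (toy_selected), and also shows that select() accepts the same new_name = old_name syntax, which can fold the select and rename steps into one.

```r
library(dplyr)

# Hypothetical one-row stand-in for opd_selected
toy_selected <- tibble(
  Role     = "Teacher",
  Resource = "Live Webinar",
  Q21      = "levels of questioning"
)

# rename(): new_name = old_name; every column is kept
toy_renamed <- rename(toy_selected, text = Q21)

# select() can rename while selecting, but keeps only the columns it lists
toy_renamed_2 <- select(toy_selected, Role, Resource, text = Q21)

names(toy_renamed)  # "Role" "Resource" "text"
```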

# A tibble: 57,054 × 3

Role Resource text

<chr> <chr> <chr>

1 "What is your role within your school district or organizatio… "Please… "Wha…

2 "{\"ImportId\":\"QID2\"}" "{\"Imp… "{\"…

3 "Central Office Staff (e.g. Superintendents, Tech Director, C… "Summer… <NA>

4 "Central Office Staff (e.g. Superintendents, Tech Director, C… "Online… "Glo…

5 "School Support Staff (e.g. Counselors, Technology Facilitato… "Online… <NA>

6 "School Support Staff (e.g. Counselors, Technology Facilitato… "Calend… "com…

7 "Teacher" "Live W… "lev…

8 "Teacher" "Online… "Non…

9 "School Support Staff (e.g. Counselors, Technology Facilitato… "Live W… "awa…

10 "Central Office Staff (e.g. Superintendents, Tech Director, C… "Wiki" "Inf…

# ℹ 57,044 more rows

Additional Wrangling

The following code shows how to make some slight adjustments to account for Qualtrics artifacts and missing data.

These are shown in greater detail in the Module 1 Case Study.

#use slice() to remove the top two rows, which hold Qualtrics question text and import IDs rather than responses

opd_sliced <- slice(opd_renamed, -1, -2)

#use drop_na() to remove missing data indicated by "NA"

opd_complete <- drop_na(opd_sliced)

#use filter() to keep only responses from participants who indicated their role as Teacher

opd_teacher <- filter(opd_complete, Role == "Teacher")
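The reducing steps so far (select, rename, slice, drop_na, filter) can also be chained with the pipe, which avoids naming every intermediate object. A sketch on a hypothetical toy survey (toy_survey) with two Qualtrics-style header rows; on the real data, the chain would start from opd_survey and its result would be assigned to opd_teacher.

```r
library(dplyr)
library(tidyr)

# Hypothetical toy survey: a question-text row, an import-metadata row, then responses
toy_survey <- tibble(
  Role     = c("What is your role?", "(import metadata)", "Teacher", "Teacher", "School Support Staff"),
  Resource = c("Please indicate...", "(import metadata)", "Live Webinar", "Wiki", "Online Learning Module"),
  Q21      = c("Most valuable aspect?", "(import metadata)", "levels of questioning", NA, "modeling"),
  Q8       = c("How do you use it?", "(import metadata)", "a", "b", "c")
)

toy_teacher <- toy_survey %>%
  select(Role, Resource, Q21) %>%  # keep the columns we need
  rename(text = Q21) %>%           # new_name = old_name
  slice(-1, -2) %>%                # drop the two header rows
  drop_na() %>%                    # drop rows with any NA
  filter(Role == "Teacher")        # keep teachers only

toy_teacher  # 1 row: Teacher, Live Webinar, "levels of questioning"
```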

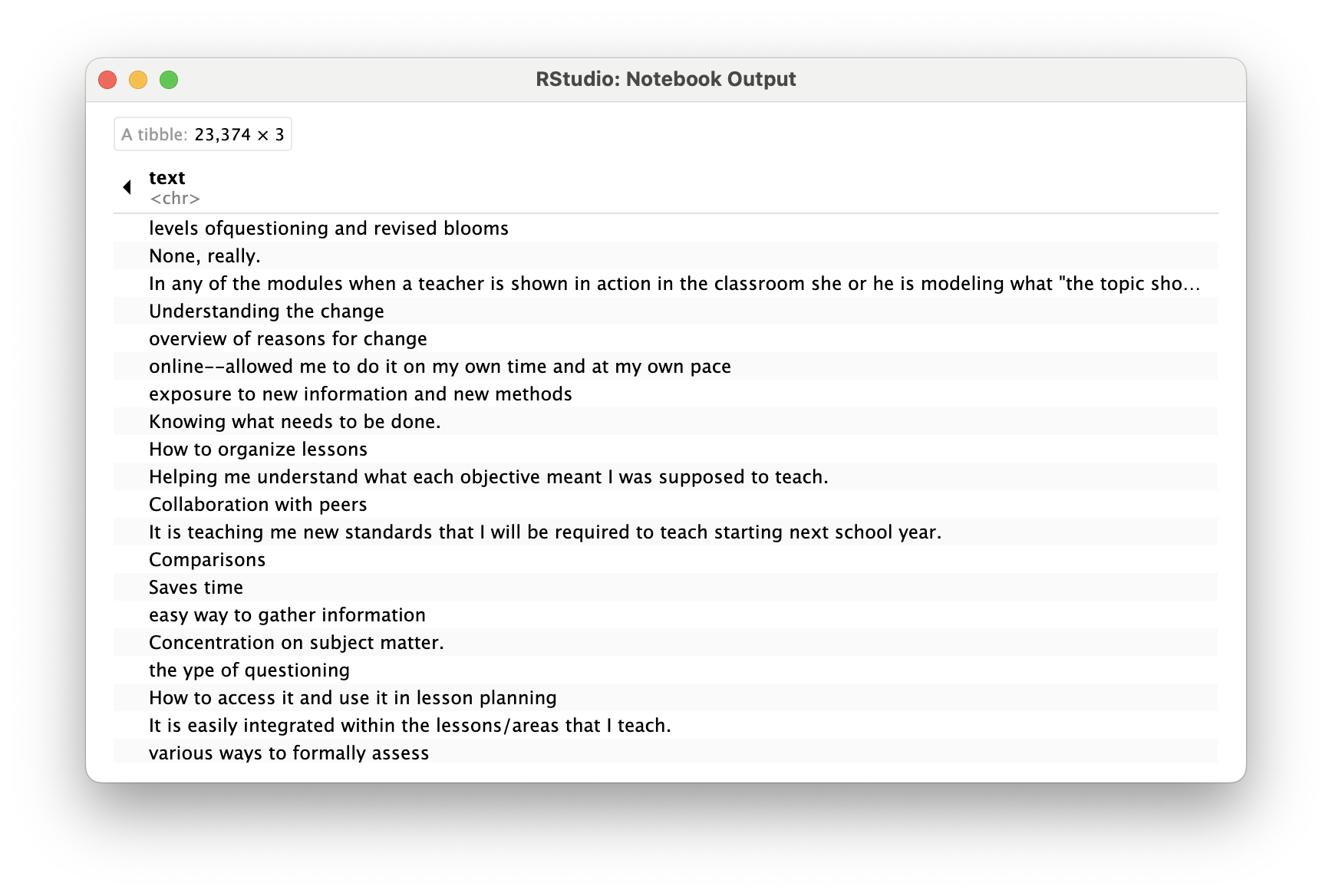

opd_teacher

# A tibble: 23,374 × 3

Role Resource text

<chr> <chr> <chr>

1 Teacher Live Webinar "levels ofquestioning and revise…

2 Teacher Online Learning Module "None, really."

3 Teacher Online Learning Module "In any of the modules when a te…

4 Teacher Online Learning Module "Understanding the change"

5 Teacher Online Learning Module "overview of reasons for change"

6 Teacher Online Learning Module "online--allowed me to do it on …

7 Teacher Summer Institute/RESA Presentations "exposure to new information and…

8 Teacher Summer Institute/RESA Presentations "Knowing what needs to be done."

9 Teacher Other, please specify "How to organize lessons"

10 Teacher Document, please specify "Helping me understand what each…

# ℹ 23,364 more rows

Tidying Text

For this part of our workflow we focus on the following functions from the tidytext and dplyr packages, respectively:

- unnest_tokens(): splits a column into tokens.

- anti_join(): returns all rows from x without a match in y.

Tidy data has a specific structure:

- Each variable is a column.

- Each observation is a row.

- Each type of observational unit is a table.

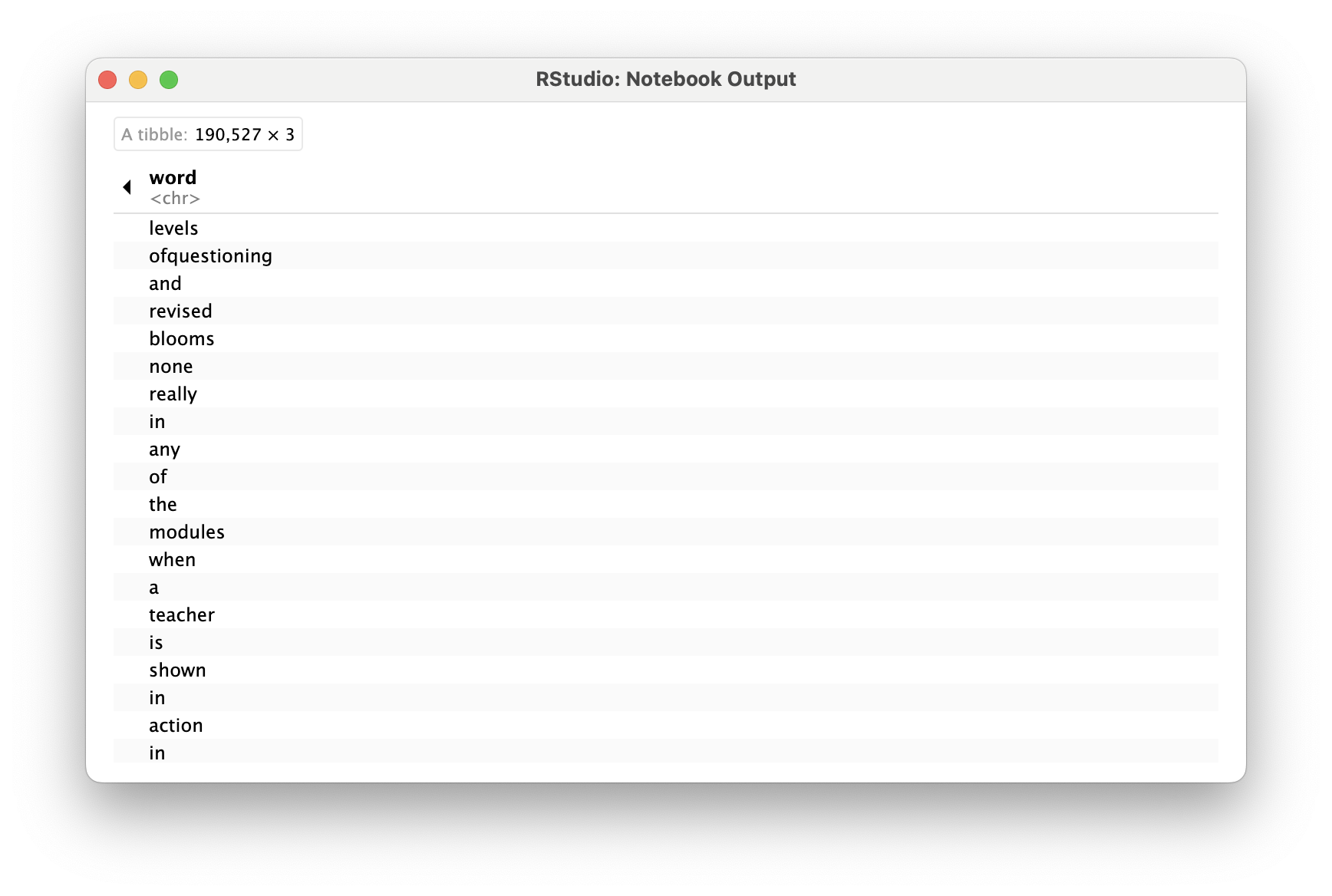

unnest_tokens()

The unnest_tokens() function transforms our opd_teacher text data from one response per row into one token (here, one word) per row.

Use unnest_tokens() to tokenize opd_teacher into a new object, opd_tidy. Within the function, follow the table opd_teacher with the output and input arguments, word and text, respectively.
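On the real data the call is opd_tidy <- unnest_tokens(opd_teacher, word, text). The sketch below applies it to two hypothetical responses (toy_teacher) to show the one-word-per-row result; by default unnest_tokens() lowercases the text, strips punctuation, and drops the input column.

```r
library(dplyr)
library(tidytext)

# Hypothetical responses, one per row (a stand-in for opd_teacher)
toy_teacher <- tibble(
  Resource = c("Live Webinar", "Online Learning Module"),
  text     = c("Levels of questioning", "None, really.")
)

# output column (word) first, then input column (text)
toy_tidy <- unnest_tokens(toy_teacher, word, text)

toy_tidy$word  # "levels" "of" "questioning" "none" "really"
```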

Remove Stop Words

One final step in tidying our text is to remove words that don’t add much value, such as “and”, “the”, “of”, and “to”.

The tidytext package contains a stop_words data frame, which lists common stop words drawn from three lexicons (onix, SMART, and snowball) and which we can use as a filter.

Here are some of those words:
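One way to print the first 20 entries, and to see which lexicons the data frame combines, assuming tidytext is installed:

```r
library(dplyr)
library(tidytext)

# First 20 rows of the stop_words data frame (columns: word, lexicon)
head(stop_words, 20)

# Number of stop words contributed by each lexicon
count(stop_words, lexicon)
```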

# A tibble: 20 × 2

word lexicon

<chr> <chr>

1 a SMART

2 a's SMART

3 able SMART

4 about SMART

5 above SMART

6 according SMART

7 accordingly SMART

8 across SMART

9 actually SMART

10 after SMART

11 afterwards SMART

12 again SMART

13 against SMART

14 ain't SMART

15 all SMART

16 allow SMART

17 allows SMART

18 almost SMART

19 alone SMART

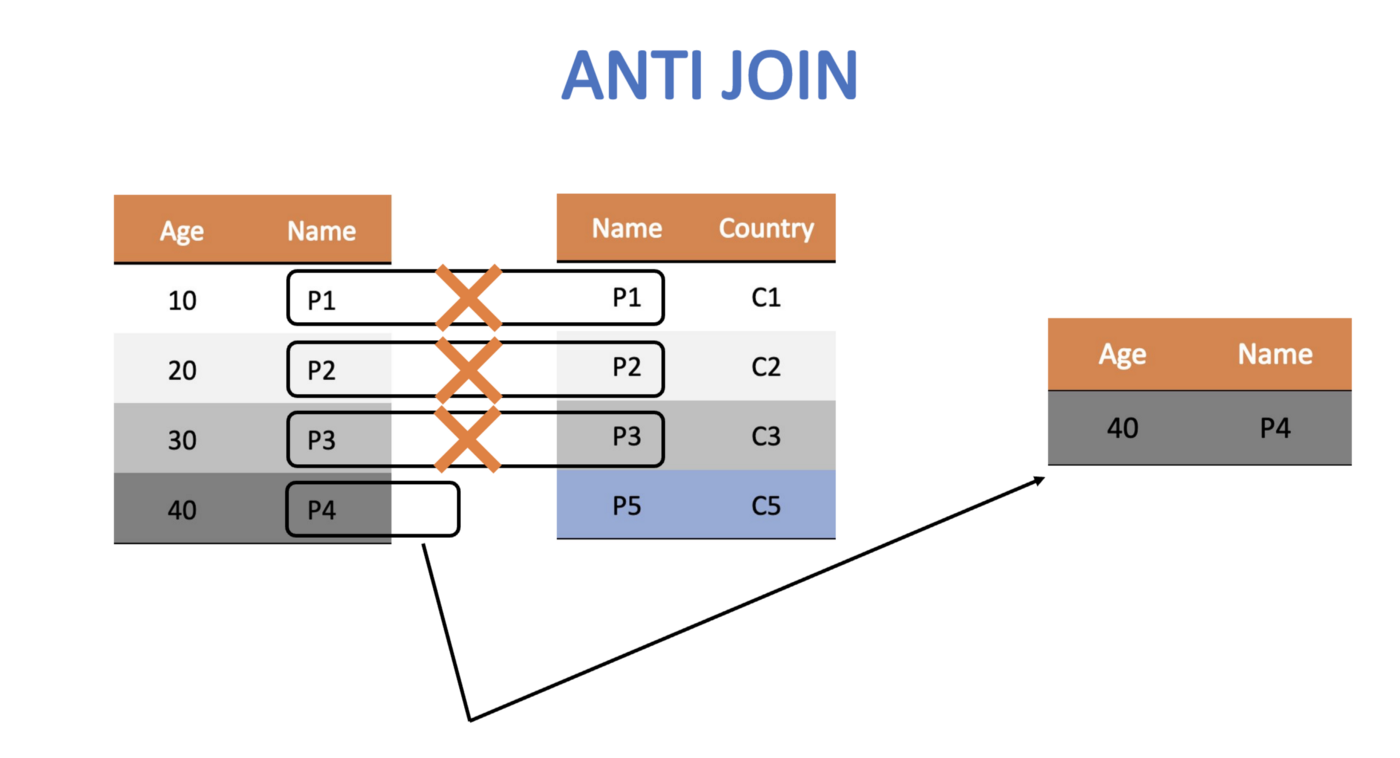

20 along SMART anti_join() looks for matching values in a specific column from two datasets and returns rows from the original dataset that have no matches.

- Use anti_join() to remove the rows from opd_tidy whose word value matches a word in the stop_words dataset.

- Save the result as opd_clean, since this is the final cleaning step in this exercise.
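On the real data this step is opd_clean <- anti_join(opd_tidy, stop_words, by = "word"). The sketch below runs the same join on hypothetical tokens (toy_tidy); passing by = "word" makes the join column explicit, whereas without it anti_join() joins on all shared columns and prints a message saying which ones it used.

```r
library(dplyr)
library(tidytext)

# Hypothetical tokens for one response, as unnest_tokens() would produce them
toy_tidy <- tibble(
  Resource = "Live Webinar",
  word     = c("levels", "of", "and", "revised", "blooms")
)

# Keep only rows whose word does NOT appear in stop_words$word
toy_clean <- anti_join(toy_tidy, stop_words, by = "word")

toy_clean$word  # "levels" "revised" "blooms"
```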

# A tibble: 78,675 × 3

Role Resource word

<chr> <chr> <chr>

1 Teacher Live Webinar levels

2 Teacher Live Webinar ofquestioning

3 Teacher Live Webinar revised

4 Teacher Live Webinar blooms

5 Teacher Online Learning Module modules

6 Teacher Online Learning Module teacher

7 Teacher Online Learning Module shown

8 Teacher Online Learning Module action

9 Teacher Online Learning Module classroom

10 Teacher Online Learning Module modeling

# ℹ 78,665 more rows

What’s Next?

- Explore the R Basics column of Posit Cloud’s Recipes

- Complete the Module 1 case study

- Complete the Foundations of Learning Analytics badge

- Do essential readings for the next module